Step 1: Copy the files you want to merge into HDFS. In this case, file1.txt and file2.txt are copied into the /Hadoop_File directory: `hdfs dfs -copyFromLocal /home/dikshant/Documents/hadoop_file/file1.txt /home/dikshant/Documents/hadoop_file/file2.txt /Hadoop_File`

Step 2: Now use the `-getmerge` command to merge these files into a single output file on the local file system.

Syntax: `hdfs dfs -getmerge -nl /path1 /path2 ... /pathN /local-destination`. The `-nl` option adds a newline at the end of each file, so the contents of the n files stay separated in the output.

In this case the files are merged into the hadoop_file folder inside my Documents folder: `hdfs dfs -getmerge -nl /Hadoop_File/file1.txt /Hadoop_File/file2.txt /home/dikshant/Documents/hadoop_file/output.txt`

Now let's check whether the files were merged into output.txt: the contents of both files appear in it, so the merge succeeded.

Below is the image showing the input files inside my /Hadoop_File directory in HDFS.

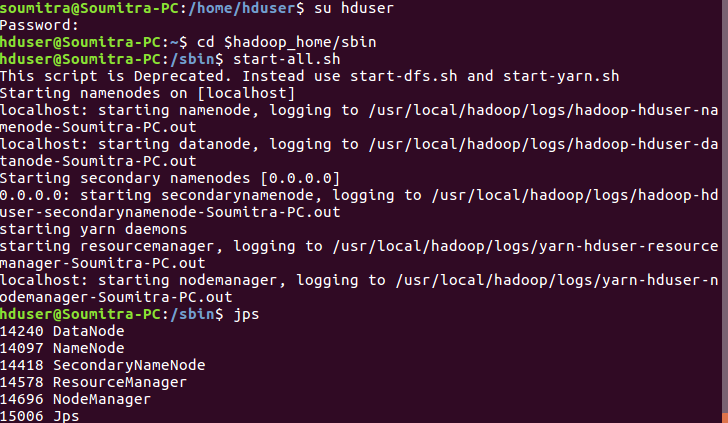
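To make the effect of `-nl` concrete without a cluster, here is a minimal local sketch that imitates it with plain shell: it concatenates files and writes a newline after each one. The helper name `merge_with_newlines`, its argument order (destination first), and the sample file contents are assumptions made for illustration; this is not how Hadoop implements `-getmerge`.

```shell
# Local stand-in for `hdfs dfs -getmerge -nl` (hypothetical helper, not part of Hadoop).
# Usage: merge_with_newlines DEST SRC...
# Unlike getmerge, the destination comes first here to keep the function simple.
merge_with_newlines() {
  dest=$1
  shift
  : > "$dest"                 # start from an empty destination file
  for src in "$@"; do
    cat "$src" >> "$dest"     # append the file contents
    printf '\n' >> "$dest"    # then a newline, as -nl does after each file
  done
}

# Demo with two small files that lack a trailing newline.
workdir="$(mktemp -d)"
printf 'first file'  > "$workdir/file1.txt"
printf 'second file' > "$workdir/file2.txt"
merge_with_newlines "$workdir/output.txt" "$workdir/file1.txt" "$workdir/file2.txt"
cat "$workdir/output.txt"
```

Without the added newline, the two sample files would run together on a single line; with it, each file's content ends on its own line.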

If you don't know how to make the directory and copy files to HDFS, use the following commands. Create the Hadoop_File directory in HDFS with `hdfs dfs -mkdir /Hadoop_File`, then copy the files into it with `hdfs dfs -copyFromLocal` as shown earlier. In this case, both files were copied into the Hadoop_File folder in HDFS.

For the HDFS-side merge with `hadoop fs -cat ... | hadoop fs -put -` described below, merged_files stores my output result. It need not be created manually: the `-put` step creates it automatically to store your output. You can view the output with a command such as `hadoop fs -cat /user/edureka_425640/merged_files`.

Suppose we have a folder with multiple empty files and some non-empty files, and we want to delete the empty ones. Here I have a folder, temp_folder, with three files: two empty and one non-empty. The command below lists everything under /user/A/ recursively, drops the directory entries, and passes the zero-size paths to `-rm`: `hdfs dfs -rm $(hdfs dfs -ls -R /user/A/ | grep -v "^d" | awk '$5 == 0 {print $8}')`. The awk filter assumes the usual `-ls` layout, with the file size in column 5 and the path in column 8.
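The empty-file cleanup depends entirely on how the `hdfs dfs -ls -R` listing is filtered, so it can help to try the filter on sample text before pointing it at `-rm`. The sketch below does that. The listing is made up for illustration (owner, dates, and file names are assumptions), and `sample_listing` and `empty_file_paths` are hypothetical helper names.

```shell
# Made-up `hdfs dfs -ls -R` output for illustration.
# Columns: permissions, replication, owner, group, size, date, time, path.
sample_listing() {
  cat <<'EOF'
drwxr-xr-x   - A supergroup          0 2020-01-01 10:00 /user/A/temp_folder
-rw-r--r--   1 A supergroup          0 2020-01-01 10:01 /user/A/temp_folder/empty1.txt
-rw-r--r--   1 A supergroup          0 2020-01-01 10:02 /user/A/temp_folder/empty2.txt
-rw-r--r--   1 A supergroup         42 2020-01-01 10:03 /user/A/temp_folder/data.txt
EOF
}

# Drop directory entries (lines starting with "d"), then print the path
# (column 8) of every entry whose size (column 5) is zero.
# The NF >= 8 guard skips any line that is not a full listing row.
empty_file_paths() {
  grep -v '^d' | awk 'NF >= 8 && $5 == 0 { print $8 }'
}

sample_listing | empty_file_paths
```

On a real cluster the same filter would be fed by `hdfs dfs -ls -R /user/A/` instead of `sample_listing`. Because the filter splits on whitespace, paths that contain spaces would be truncated, so it is worth checking the printed list before deleting anything.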

In order to merge two or more files into one single file and store it in HDFS, you need a folder in the HDFS path containing the files that you want to merge. Here, I have a folder named merge_files which contains the files that I want to merge. You can then execute the following command to merge the files and store the result in HDFS: `hadoop fs -cat /user/edureka_425640/merge_files/* | hadoop fs -put - /user/edureka_425640/merged_files`

A merge like this has to funnel the data through one machine, and there are two ways to do that. The first is a custom MapReduce job with a single reducer and a custom mapper and reducer that retain the file ordering (remember that each line will be sorted by key, so your key will need to be some combination of the input file name and line number, and the value will be the line itself). The second is the FsShell commands, depending on your network topology, i.e. does your client console have a good-speed connection to the datanodes? This is certainly the least effort on your part, and will probably complete quicker than an MR job doing the same (as everything has to go to one machine anyway, so why not your local console?).
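The key design mentioned for the MapReduce option can be imitated on local files to see why it preserves ordering. This is only a sketch of the idea in plain shell, not a MapReduce job: `tag_lines` and `restore_order` are hypothetical helpers, and the key uses the file's position in the argument list rather than its name, an assumption made so that the sort order does not depend on how the file names happen to compare.

```shell
# "Map" step: tag every line with a key made of the file's position in the
# argument list and the line number inside that file, separated by tabs.
tag_lines() {
  awk 'FNR == 1 { file_idx++ } { printf "%d\t%d\t%s\n", file_idx, FNR, $0 }' "$@"
}

# "Shuffle and reduce" step: sort numerically on both key parts,
# then strip the keys so only the original lines remain.
restore_order() {
  sort -t "$(printf '\t')" -k1,1n -k2,2n | cut -f3-
}

# Demo: reverse the tagged records to imitate an arbitrary arrival order,
# then recover the original file-by-file, line-by-line order.
workdir="$(mktemp -d)"
printf 'a1\na2\n' > "$workdir/csv1.csv"
printf 'b1\nb2\n' > "$workdir/csv2.csv"
tag_lines "$workdir/csv1.csv" "$workdir/csv2.csv" | sort -r | restore_order
```

The value part of each record is the untouched line, so the final output is the plain concatenation of the inputs even though the records were reordered in between.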

The error relates to you trying to redirect the standard output of the command back to HDFS. There are ways you can do this, using the `hadoop fs -put` command with the source argument being a hyphen: `bin/hadoop fs -cat /user/username/folder/csv1.csv /user/username/folder/csv2.csv | hadoop fs -put - /user/username/folder/output.csv`. Note that `-getmerge` also outputs to the local file system, not HDFS.

Unfortunately, there is no efficient way to merge multiple files into one (unless you want to look into Hadoop 'appending', but in your version of Hadoop that is disabled by default and potentially buggy) without having to copy the files to one machine and then back into HDFS, whether you do that in a custom MapReduce job or via the FsShell commands.
